目录

一、pgpool-II概述

核心功能

架构模式

二、环境信息

三、部署postgresql数据库

下载yum仓库与依赖包

安装postgresql

创建归档目录

初始化主数据库

配置文件postgresql.conf修改

创建数据库用户

配置pg_hba.conf

操作系统配置免密

repl复制用户免密

四、部署pgpool-II

安装依赖包

排除pg仓库的pgpool包

安装pgpool-II

创建pgpool_node_id

pgpool.conf配置文件详解

pgpool.conf配置文件示例

pgpool-II启动与关闭

五、创建测试表

六、pgpool-II使用

pcp_recovery_node 创建备份服务器

负载均衡测试

Failover故障转移

在线恢复

七、问题解决

一、pgpool-II概述

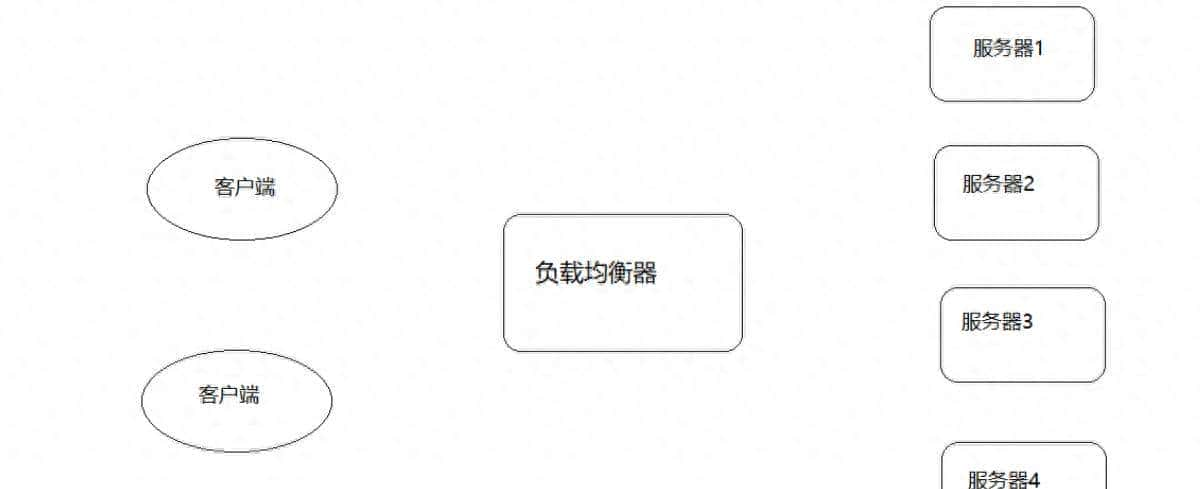

pgpool-II 是一个用于 PostgreSQL 数据库的中间件工具,提供连接池、负载均衡、自动故障转移和高可用性等功能。它充当客户端和 PostgreSQL 服务器之间的代理,优化数据库性能并增强可靠性。

核心功能

连接池

pgpool-II 维护一个数据库连接池,复用客户端连接以减少频繁建立和断开连接的开销,适合高并发场景。

负载均衡

在多个 PostgreSQL 服务器间分发读查询(SELECT 语句),基于配置的权重分配请求,提升整体吞吐量。写操作(INSERT/UPDATE/DELETE)默认发送到主节点。

自动故障转移

结合流复制或逻辑复制,当主节点故障时,pgpool-II 可自动提升备节点为新主节点,确保服务连续性。需配合 watchdog 模块实现自身高可用。

查询缓存

可选功能,缓存常用查询结果,减少重复计算和数据库负载,适用于读多写少的场景。

架构模式

主从模式(Streaming Replication)

pgpool-II 与 PostgreSQL 的流复制结合,实现读写分离和故障转移。主节点处理写操作,从节点处理读操作。

并行查询模式

通过数据分片(Sharding)将查询分发到多个节点并行执行,适合大规模数据分析。需配合 PostgreSQL 的外表功能(Foreign Data Wrapper)。

二、环境信息

postgresql环境信息

| 序号 | 服务器IP地址 | 服务器主机名 | 数据库版本 | 节点类型 | 操作系统版本 | 备注 |

| 1 | 192.168.1.62 | pg62 | 15.15 | postgresql | redhat 7.6 | |

| 2 | 192.168.1.63 | pg63 | 15.15 | postgresql | redhat 7.6 | |

| 3 | 192.168.1.64 | pg64 | 15.15 | postgresql | redhat 7.6 | |

| 4 | 192.168.1.65 | / | / | / | / | vip地址 |

pgpool-II环境信息

| 序号 | 服务器IP地址 | 服务器主机名 | pgpool版本 | 节点类型 | 操作系统版本 | 备注 |

| 1 | 192.168.1.62 | pg62 | 4.4.7 | pgpool | redhat 7.6 | |

| 2 | 192.168.1.63 | pg63 | 4.4.7 | pgpool | redhat 7.6 | |

| 3 | 192.168.1.64 | pg64 | 4.4.7 | pgpool | redhat 7.6 |

[root@pg64 pgpool-II]# pgpool –version

pgpool-II version 4.4.7 (nurikoboshi)

三、部署postgresql数据库

# pg62、pg63、pg64服务器执行操作。yum 默认安装postgresql在这个目录下:/var/lib/pgsql

下载yum仓库与依赖包

# postgresql yum仓库

yum install -y https://download.postgresql.org/pub/repos/yum/reporpms/EL-7-x86_64/pgdg-redhat-repo-latest.noarch.rpm

# libzstd依赖包下载安装

wget https://archives.fedoraproject.org/pub/archive/epel/7/x86_64/Packages/l/libzstd-1.5.5-1.el7.x86_64.rpm

wget https://archives.fedoraproject.org/pub/archive/epel/7/x86_64/Packages/l/libzstd-devel-1.5.5-1.el7.x86_64.rpm

rpm -ivh libzstd-1.5.5-1.el7.x86_64.rpm libzstd-devel-1.5.5-1.el7.x86_64.rpm安装postgresql

[root@pg62 yum.repos.d]# yum install -y postgresql15-server

Loaded plugins: langpacks, product-id, search-disabled-repos, subscription-manager

This system is not registered with an entitlement server. You can use subscription-manager to register.

Resolving Dependencies

--> Running transaction check

---> Package postgresql15-server.x86_64 0:15.15-4PGDG.rhel7 will be installed

--> Processing Dependency: postgresql15-libs(x86-64) = 15.15-4PGDG.rhel7 for package: postgresql15-server-15.15-4PGDG.rhel7.x86_64

--> Processing Dependency: postgresql15(x86-64) = 15.15-4PGDG.rhel7 for package: postgresql15-server-15.15-4PGDG.rhel7.x86_64

--> Processing Dependency: libpq.so.5()(64bit) for package: postgresql15-server-15.15-4PGDG.rhel7.x86_64

--> Running transaction check

---> Package postgresql15.x86_64 0:15.15-4PGDG.rhel7 will be installed

---> Package postgresql15-libs.x86_64 0:15.15-4PGDG.rhel7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

=============================================================================================================================================================

Package Arch Version Repository Size

=============================================================================================================================================================

Installing:

postgresql15-server x86_64 15.15-4PGDG.rhel7 pgdg15 5.9 M

Installing for dependencies:

postgresql15 x86_64 15.15-4PGDG.rhel7 pgdg15 1.6 M

postgresql15-libs x86_64 15.15-4PGDG.rhel7 pgdg15 290 k

Transaction Summary

=============================================================================================================================================================

Install 1 Package (+2 Dependent packages)

Total size: 7.8 M

Total download size: 5.9 M

Installed size: 34 M

Downloading packages:

No Presto metadata available for pgdg15

warning: /var/cache/yum/x86_64/7Server/pgdg15/packages/postgresql15-server-15.15-4PGDG.rhel7.x86_64.rpm: Header V4 RSA/SHA1 Signature, key ID 73e3b907: NOKEY

Public key for postgresql15-server-15.15-4PGDG.rhel7.x86_64.rpm is not installed

postgresql15-server-15.15-4PGDG.rhel7.x86_64.rpm | 5.9 MB 00:00:29

Retrieving key from file:///etc/pki/rpm-gpg/PGDG-RPM-GPG-KEY-RHEL7

Importing GPG key 0x73E3B907:

Userid : "PostgreSQL RPM Repository <pgsql-pkg-yum@lists.postgresql.org>"

Fingerprint: f245 f0bf 96ac 1827 44ca ff2e 64fa ce11 73e3 b907

Package : pgdg-redhat-repo-42.0-38PGDG.noarch (installed)

From : /etc/pki/rpm-gpg/PGDG-RPM-GPG-KEY-RHEL7

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Warning: RPMDB altered outside of yum.

Installing : postgresql15-libs-15.15-4PGDG.rhel7.x86_64 1/3

Installing : postgresql15-15.15-4PGDG.rhel7.x86_64 2/3

Installing : postgresql15-server-15.15-4PGDG.rhel7.x86_64 3/3

Verifying : postgresql15-server-15.15-4PGDG.rhel7.x86_64 1/3

Verifying : postgresql15-15.15-4PGDG.rhel7.x86_64 2/3

Verifying : postgresql15-libs-15.15-4PGDG.rhel7.x86_64 3/3

Installed:

postgresql15-server.x86_64 0:15.15-4PGDG.rhel7

Dependency Installed:

postgresql15.x86_64 0:15.15-4PGDG.rhel7 postgresql15-libs.x86_64 0:15.15-4PGDG.rhel7

Complete!

[root@pg62 yum.repos.d]# 创建归档目录

#创建归档目录,pg62、pg63、pg64服务器执行

[all servers]# su - postgres

[all servers]$ mkdir /var/lib/pgsql/archivedir初始化主数据库

[pg62]# su – postgres

[pg62]$ /usr/pgsql-15/bin/initdb -D $PGDATA

#初始化主数据库,pg62节点操作

su - postgres

-bash-4.2$ /usr/pgsql-15/bin/initdb -D $PGDATA

The files belonging to this database system will be owned by user "postgres".

This user must also own the server process.

The database cluster will be initialized with locale "en_US.UTF-8".

The default database encoding has accordingly been set to "UTF8".

The default text search configuration will be set to "english".

Data page checksums are disabled.

fixing permissions on existing directory /var/lib/pgsql/15/data ... ok

creating subdirectories ... ok

selecting dynamic shared memory implementation ... posix

selecting default max_connections ... 100

selecting default shared_buffers ... 128MB

selecting default time zone ... Asia/Shanghai

creating configuration files ... ok

running bootstrap script ... ok

performing post-bootstrap initialization ... ok

syncing data to disk ... ok

initdb: warning: enabling "trust" authentication for local connections

initdb: hint: You can change this by editing pg_hba.conf or using the option -A, or --auth-local and --auth-host, the next time you run initdb.

Success. You can now start the database server using:

/usr/pgsql-15/bin/pg_ctl -D /var/lib/pgsql/15/data -l logfile start

-bash-4.2$ 配置文件postgresql.conf修改

#主服务器pg62编辑配置文件修改参数

vi $PGDATA/postgresql.conf

listen_addresses = '*'

archive_mode = on

archive_command = 'cp "%p" "/var/lib/pgsql/archivedir/%f"'

max_wal_senders = 10

max_replication_slots = 10

wal_level = replica

hot_standby = on

wal_log_hints = on创建数据库用户

# 创建用户命令

psql -U postgres -p 5432

postgres=# SET password_encryption = 'scram-sha-256';

postgres=# CREATE ROLE pgpool WITH LOGIN;

postgres=# CREATE ROLE repl WITH REPLICATION LOGIN;

postgres=# password pgpool

postgres=# password repl

postgres=# password postgres

postgres=# GRANT pg_monitor TO pgpool;

--执行过程

-bash-4.2$ /usr/pgsql-15/bin/pg_ctl -D /var/lib/pgsql/15/data -l logfile start

waiting for server to start.... done

server started

-bash-4.2$

-bash-4.2$

-bash-4.2$ psql -U postgres -p 5432

psql (15.15)

Type "help" for help.

postgres=# SET password_encryption = 'scram-sha-256';

SET

postgres=# CREATE ROLE pgpool WITH LOGIN;

CREATE ROLE

postgres=# CREATE ROLE repl WITH REPLICATION LOGIN;

CREATE ROLE

postgres=# password pgpool

Enter new password for user "pgpool":

Enter it again:

postgres=# password repl

Enter new password for user "repl":

Enter it again:

postgres=# password postgres

Enter new password for user "postgres":

Enter it again:

postgres=# GRANT pg_monitor TO pgpool;

GRANT ROLE

postgres=# 配置pg_hba.conf

vi /var/lib/pgsql/15/data/pg_hba.conf

# TYPE DATABASE USER ADDRESS METHOD

# "local" is for Unix domain socket connections only

local all all trust

# IPv4 local connections:

host all all 127.0.0.1/32 trust

host all all 192.168.1.0/24 scram-sha-256

# IPv6 local connections:

host all all ::1/128 trust

# Allow replication connections from localhost, by a user with the

# replication privilege.

local replication all trust

host replication all 127.0.0.1/32 trust

host replication all ::1/128 trust

host replication all 192.168.1.0/24 scram-sha-256操作系统配置免密

要使用 Pgpool-II 的自动故障转移和在线恢复功能,需要允许所有后端服务器以 Pgpool-II 启动用户(默认为 postgres。Pgpool-II 4.0 或更早版本,默认为 root)和 PostgreSQL 启动用户(默认为 postgres)的身份进行 SSH 公钥认证(无密码 SSH 登录)。

# 配置主机名称解析

echo "192.168.1.62 pg62" >> /etc/hosts

echo "192.168.1.63 pg63" >> /etc/hosts

echo "192.168.1.64 pg64" >> /etc/hostsroot用户免密配置过程

--root

[pg62、pg63、pg64]# mkdir ~/.ssh

[pg62、pg63、pg64]# chmod 700 ~/.ssh

[pg62、pg63、pg64]# cd ~/.ssh

[pg62、pg63、pg64]# ssh-keygen -t rsa -f id_rsa_pgpool

[pg62、pg63、pg64]# ssh-copy-id -i id_rsa_pgpool.pub postgres@server1

[pg62、pg63、pg64]# ssh-copy-id -i id_rsa_pgpool.pub postgres@server2

[pg62、pg63、pg64]# ssh-copy-id -i id_rsa_pgpool.pub postgres@server3

-- 执行过程

[root@pg62 .ssh]# ssh-keygen -t rsa -f id_rsa_pgpool

Generating public/private rsa key pair.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in id_rsa_pgpool.

Your public key has been saved in id_rsa_pgpool.pub.

The key fingerprint is:

SHA256:l3qQ3BH5ZR1R+Iq3ciRA9LvhBMUTn/4MiGrQiv5YaP4 root@pg62

The key's randomart image is:

+---[RSA 2048]----+

| .ooo. ==|

| o+o.+..|

| .o..+o. |

| o o.=.+ .|

| . S =.=.o. |

| o o = ooo++ |

| + o + . o+ .o|

| + o . . . o |

| +oE o |

+----[SHA256]-----+

[root@pg62 .ssh]#

--根据提示输入密码

ssh-copy-id -i id_rsa_pgpool.pub postgres@pg62

ssh-copy-id -i id_rsa_pgpool.pub postgres@pg63

ssh-copy-id -i id_rsa_pgpool.pub postgres@pg64--验证

[root@pg62 .ssh]# ssh postgres@pg62 -i ~/.ssh/id_rsa_pgpool

Last login: Wed Dec 10 16:37:31 2025 from pg62

-bash-4.2$ exit

logout

Connection to pg62 closed.

[root@pg62 .ssh]# ssh postgres@pg63 -i ~/.ssh/id_rsa_pgpool

Last login: Wed Dec 10 16:30:31 2025

-bash-4.2$ exit

logout

Connection to pg63 closed.

[root@pg62 .ssh]# ssh postgres@pg64 -i ~/.ssh/id_rsa_pgpool

Last login: Wed Dec 10 16:30:31 2025

-bash-4.2$ exit

logout

Connection to pg64 closed.postgres用户免密配置过程

# postgres用户配置免密

[pg62、pg63、pg64]# su - postgres

[pg62、pg63、pg64]$ mkdir ~/.ssh

[pg62、pg63、pg64]$ chmod 700 ~/.ssh

[pg62、pg63、pg64]$ cd ~/.ssh

[pg62、pg63、pg64]$ ssh-keygen -t rsa -f id_rsa_pgpool

[pg62、pg63、pg64]$ ssh-copy-id -i id_rsa_pgpool.pub postgres@pg62

[pg62、pg63、pg64]$ ssh-copy-id -i id_rsa_pgpool.pub postgres@pg63

[pg62、pg63、pg64]$ ssh-copy-id -i id_rsa_pgpool.pub postgres@pg64

--验证

ssh postgres@serverX -i ~/.ssh/id_rsa_pgpool

[root@pg62 .ssh]# su - postgres

Last login: Wed Dec 10 16:41:47 CST 2025 from pg64 on pts/1

-bash-4.2$ ssh postgres@pg62 -i ~/.ssh/id_rsa_pgpool

Last login: Wed Dec 10 16:42:15 2025

-bash-4.2$ hostname

pg62

-bash-4.2$ exit

logout

Connection to pg62 closed.

-bash-4.2$ ssh postgres@pg63 -i ~/.ssh/id_rsa_pgpool

Last login: Wed Dec 10 16:41:56 2025 from pg64

-bash-4.2$ hostname

pg63

-bash-4.2$ exit

logout

Connection to pg63 closed.

-bash-4.2$ ssh postgres@pg64 -i ~/.ssh/id_rsa_pgpool

Last login: Wed Dec 10 16:42:06 2025 from pg64

-bash-4.2$ hostname

pg64

-bash-4.2$ exit

logout

Connection to pg64 closed.repl复制用户免密

为了允许 repl 用户在不指定密码的情况下进行流复制和在线恢复,并使用 postgres 执行 pg_rewind,我们在 postgres 用户的主目录中创建 .pgpass 文件,并将每个 PostgreSQL 服务器上的权限更改为 600。

[pg62、pg63、pg64]$ vi /var/lib/pgsql/.pgpass

pg62:5432:replication:repl:repl

pg63:5432:replication:repl:repl

pg64:5432:replication:repl:repl

pg62:5432:postgres:postgres:postgres

pg63:5432:postgres:postgres:postgres

pg64:5432:postgres:postgres:postgres

[pg62、pg63、pg64]$ chmod 600 /var/lib/pgsql/.pgpass四、部署pgpool-II

# pg62、pg63、pg64服务器执行操作。

安装依赖包

libmemcached-1.0.16-5.el7.x86_64 <<< 基础包需要安装 mount /dev/cdrom /mnt

yum install -y libmemcached排除pg仓库的pgpool包

# 修改pgdg仓库文件

vi /etc/yum.repos.d/pgdg-redhat-all.repo

[pgdg-common]

...

exclude=pgpool*

[pgdg15]

...

exclude=pgpool*

[pgdg14]

...

exclude=pgpool*

[pgdg13]

...

exclude=pgpool*

[pgdg12]

...

exclude=pgpool*

[pgdg11]

...

exclude=pgpool*

[pgdg10]

...

exclude=pgpool*

#拷贝修改的文件至其它服务器

scp /etc/yum.repos.d/pgdg-redhat-all.repo 192.168.1.63:/etc/yum.repos.d/pgdg-redhat-all.repo

scp /etc/yum.repos.d/pgdg-redhat-all.repo 192.168.1.64:/etc/yum.repos.d/pgdg-redhat-all.repo 安装pgpool-II

[root@pg62 yum.repos.d]# yum install -y https://www.pgpool.net/yum/rpms/4.4/redhat/rhel-7-x86_64/pgpool-II-release-4.4-1.noarch.rpm

Loaded plugins: langpacks, product-id, search-disabled-repos, subscription-manager

This system is not registered with an entitlement server. You can use subscription-manager to register.

pgpool-II-release-4.4-1.noarch.rpm | 5.7 kB 00:00:00

Examining /var/tmp/yum-root-IXf77b/pgpool-II-release-4.4-1.noarch.rpm: pgpool-II-release-4.4-1.noarch

Marking /var/tmp/yum-root-IXf77b/pgpool-II-release-4.4-1.noarch.rpm to be installed

Resolving Dependencies

--> Running transaction check

---> Package pgpool-II-release.noarch 0:4.4-1 will be installed

--> Finished Dependency Resolution

pgdg-common/7Server/x86_64/signature | 665 B 00:00:00

pgdg-common/7Server/x86_64/signature | 2.9 kB 00:00:00 !!!

pgdg12/7Server/x86_64/signature | 665 B 00:00:00

pgdg12/7Server/x86_64/signature | 3.6 kB 00:00:00 !!!

pgdg13/7Server/x86_64/signature | 665 B 00:00:00

pgdg13/7Server/x86_64/signature | 3.6 kB 00:00:00 !!!

pgdg14/7Server/x86_64/signature | 665 B 00:00:00

pgdg14/7Server/x86_64/signature | 3.6 kB 00:00:00 !!!

pgdg15/7Server/x86_64/signature | 665 B 00:00:00

pgdg15/7Server/x86_64/signature | 3.6 kB 00:00:00 !!!

Dependencies Resolved

=============================================================================================================================================================

Package Arch Version Repository Size

=============================================================================================================================================================

Installing:

pgpool-II-release noarch 4.4-1 /pgpool-II-release-4.4-1.noarch 2.9 k

Transaction Summary

=============================================================================================================================================================

Install 1 Package

Total size: 2.9 k

Installed size: 2.9 k

Downloading packages:

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : pgpool-II-release-4.4-1.noarch 1/1

Verifying : pgpool-II-release-4.4-1.noarch 1/1

Installed:

pgpool-II-release.noarch 0:4.4-1

Complete!

[root@pg62 yum.repos.d]# 创建pgpool_node_id

从 Pgpool-II 4.2 版本开始,所有主机上的配置参数均相同。如果启用了监视功能,则需要使用 pgpool_node_id 文件来区分不同的主机。需要创建一个 pgpool_node_id 文件,并指定 pgpool(监视)节点编号(例如 0、1、2 等)来标识 pgpool(监视)主机。

pg62: echo 0 > /etc/pgpool-II/pgpool_node_id

pg63: echo 1 > /etc/pgpool-II/pgpool_node_id

pg64: echo 2 > /etc/pgpool-II/pgpool_node_idpgpool.conf配置文件详解

使用 YUM 安装 Pgpool-II 时,Pgpool-II 配置文件 pgpool.conf 会安装在 /etc/pgpool-II 目录下。从 Pgpool-II 4.2 版本开始,所有主机上的配置参数都相同,因此您可以编辑任意 pgpool 节点上的 pgpool.conf 文件,然后将编辑后的 pgpool.conf 文件复制到其他 pgpool 节点。

# /etc/pgpool-II/pgpool.conf 配置文件解析

1、 集群模式

Pgpool-II 具有多种集群模式。可以使用 `backend_clustering_mode` 参数设置集群模式。在本配置示例中,使用的是流复制模式。

backend_clustering_mode = 'streaming_replication'2、监听地址

## 监听地址

为了允许 Pgpool-II 和 PCP 接受所有传入的连接,我们设置 listen_addresses = '*'。

listen_addresses = '*'

pcp_listen_addresses = '*'3、监听端口号

## 监听端口号

指定 Pgpool-II 监听的端口号。

port = 99994、流式复制检查用户

# 在 sr_check_user 和 sr_check_password 中指定复制延迟检查用户和密码。在本例中,我们将 sr_check_password 留空,并在 pool_passwd 中创建相应条目。从 Pgpool-II 4.0 开始,如果这些参数留空,Pgpool-II 将首先尝试从 pool_passwd 文件中获取该特定用户的密码,然后再使用空密码。

sr_check_user = 'pgpool'

sr_check_password = ''5、健康检查

# 启用健康检查,以便 Pgpool-II 执行故障转移。此外,如果网络不稳定,即使后端运行正常,健康检查也可能失败,

此时可能会发生故障转移或降级操作。为了防止出现此类健康检查误检,我们将 health_check_max_retries 设置为 3。

health_check_user 和 health_check_password 的指定方式与 sr_check_user 和 sr_check_password 相同。

health_check_period = 5

health_check_timeout = 30

health_check_user = 'pgpool'

health_check_password = ''

health_check_max_retries = 36、后端设置

# 指定 PostgreSQL 后端信息。可以通过在参数名称末尾添加数字来指定多个后端。

要在 SHOW POOL NODES 命令结果中显示“replication_state”和“replication_sync_state”列,

需要指定 backend_application_name 参数。这里,我们需要在这些参数中指定每个后端的主机名。(Pgpool-II 4.1 或更高版本)

# - Backend Connection Settings -

backend_application_name0 = 'pg62'

backend_hostname0 = 'pg62'

backend_port0 = 5432

backend_weight0 = 1

backend_data_directory0 = '/var/lib/pgsql/15/data'

backend_flag0 = 'ALLOW_TO_FAILOVER'

backend_application_name1 = 'pg63'

backend_hostname1 = 'pg63'

backend_port1 = 5432

backend_weight1 = 1

backend_data_directory1 = '/var/lib/pgsql/15/data'

backend_flag1 = 'ALLOW_TO_FAILOVER'

backend_application_name2 = 'pg64'

backend_hostname2 = 'pg64'

backend_port2 = 5432

backend_weight2 = 1

backend_data_directory2 = '/var/lib/pgsql/15/data'

backend_flag2 = 'ALLOW_TO_FAILOVER'7、故障转移配置

在 failover_command 参数中指定故障转移后要执行的 failover.sh 脚本。如果使用 3 台 PostgreSQL 服务器,则需要指定 follow_primary_command 参数,以便在主节点故障转移后运行该脚本。如果只有两台 PostgreSQL 服务器,则无需设置 follow_primary_command 参数。

注意:当使用 Pgpool-II 4.3 中添加的 switchover 选项执行 pcp_promote_node 切换时,

如果要将以前的主节点自动转换为备用节点,即使有两个 PostgreSQL 服务器,也需要设置 follow_primary_command。

# 从模板拷贝配置文件,所有节点操作

cp -p /etc/pgpool-II/sample_scripts/failover.sh.sample /etc/pgpool-II/failover.sh

cp -p /etc/pgpool-II/sample_scripts/follow_primary.sh.sample /etc/pgpool-II/follow_primary.sh

chown postgres:postgres /etc/pgpool-II/{failover.sh,follow_primary.sh}/etc/pgpool-II/failover.sh

# 检查与配置PGHOME

[all servers]# vi /etc/pgpool-II/failover.sh

...

PGHOME=/usr/pgsql-15

...

-- /etc/pgpool-II/failover.sh文件需要关注的配置项,如有不同需要修改

PGHOME=/usr/pgsql-15

POSTGRESQL_STARTUP_USER=postgres

SSH_KEY_FILE=id_rsa_pgpool/etc/pgpool-II/follow_primary.sh

[all servers]# vi /etc/pgpool-II/follow_primary.sh

...

PGHOME=/usr/pgsql-15

...

-- /etc/pgpool-II/follow_primary.sh文件需要关注的配置项,如有不同需要修改

PGHOME=/usr/pgsql-15

ARCHIVEDIR=/var/lib/pgsql/archivedir

REPLUSER=repl

PCP_USER=pgpool

PGPOOL_PATH=/usr/bin

PCP_PORT=9898

POSTGRESQL_STARTUP_USER=postgres

SSH_KEY_FILE=id_rsa_pgpool

- myrecovery.conf的出处

[root@pg63 bin]# cat /var/lib/pgsql/15/data/postgresql.conf | grep include_if_exists

#include_if_exists = '...' # include file only if it exists

include_if_exists = '/var/lib/pgsql/15/data/myrecovery.conf'8、pcp用户配置

由于在 follow_primary_command 脚本中使用 PCP 命令需要用户身份验证,因此我们需要在 pcp.conf 中以“username:encrypted password”的格式指定用户名和 md5 加密密码。管理pgpool的用户密码,非数据库用户

如果 follow_primary.sh 中的 PCP_USER 指定了 pgpool 用户,

# cat /etc/pgpool-II/follow_primary.sh | grep PCP_USER

...

PCP_USER=pgpool

...

# 所有服务器执行

[root@pg62 ~]# echo 'pgpool:'`pg_md5 Pcppool234` >> /etc/pgpool-II/pcp.conf

[root@pg62 ~]# more /etc/pgpool-II/pcp.conf

# PCP Client Authentication Configuration File

# ============================================

#

# This file contains user ID and his password for pgpool

# communication manager authentication.

#

# Note that users defined here do not need to be PostgreSQL

# users. These users are authorized ONLY for pgpool

# communication manager.

#

# File Format

# ===========

#

# List one UserID and password on a single line. They must

# be concatenated together using ':' (colon) between them.

# No spaces or tabs are allowed anywhere in the line.

#

# Example:

# postgres:e8a48653851e28c69d0506508fb27fc5

#

# Be aware that there will be no spaces or tabs at the

# beginning of the line! although the above example looks

# like so.

#

# Lines beginning with '#' (pound) are comments and will

# be ignored. Again, no spaces or tabs allowed before '#'.

# USERID:MD5PASSWD

pgpool:597c2b239d46116284f274ef66e22df59、.pcppass 文件配置

由于 follow_primary.sh 脚本必须在不输入密码的情况下执行 PCP 命令,因此我们需要在每台服务器上的 Pgpool-II 启动用户(postgres 用户)的主目录中创建 .pcppass 文件。

pg62/pg63/pg64

su - postgres

echo 'localhost:9898:pgpool:Pcppool234' > ~/.pcppass

chmod 600 ~/.pcppass

[root@pg62 ~]# su - postgres

Last login: Thu Dec 11 11:13:37 CST 2025 on pts/1

-bash-4.2$ echo 'localhost:9898:pgpool:Pcppool234' > ~/.pcppass

-bash-4.2$ chmod 600 ~/.pcppass

-bash-4.2$ more ~/.pcppass

localhost:9898:pgpool:Pcppool234

注意:follow_primary.sh 脚本不支持表空间。如果您使用表空间,则需要修改该脚本以支持表空间。10、Pgpool-II 在线恢复配置

接下来,为了使用 Pgpool-II 执行在线恢复,我们需要指定 PostgreSQL 用户名和在线恢复命令 recovery_1st_stage。由于执行在线恢复需要 PostgreSQL 的超级用户权限,因此我们在 recovery_user 中指定 postgres 用户。然后,我们在 PostgreSQL 主服务器(pg62)的数据库集群目录中创建 recovery_1st_stage 和 pgpool_remote_start 两个文件,并添加执行权限。

# pg62执行

recovery_user = 'postgres'

recovery_password = ''

recovery_1st_stage_command = 'recovery_1st_stage'

cp -p /etc/pgpool-II/sample_scripts/recovery_1st_stage.sample /var/lib/pgsql/15/data/recovery_1st_stage

cp -p /etc/pgpool-II/sample_scripts/pgpool_remote_start.sample /var/lib/pgsql/15/data/pgpool_remote_start

chown postgres:postgres /var/lib/pgsql/15/data/{recovery_1st_stage,pgpool_remote_start}

基本上,只要根据 PostgreSQL 安装目录更改 PGHOME,应该就能正常工作。

[server1]# vi /var/lib/pgsql/15/data/recovery_1st_stage

...

PGHOME=/usr/pgsql-15

...

- 需要关注的参数

PGHOME=/usr/pgsql-15

ARCHIVEDIR=/var/lib/pgsql/archivedir

REPLUSER=repl

POSTGRESQL_STARTUP_USER=postgres

SSH_KEY_FILE=id_rsa_pgpool

[pg62]# vi /var/lib/pgsql/15/data/pgpool_remote_start

...

PGHOME=/usr/pgsql-15

...

- 需要关注的参数

PGHOME=/usr/pgsql-15

POSTGRESQL_STARTUP_USER=postgres

SSH_KEY_FILE=id_rsa_pgpool

# 为了使用在线恢复功能,需要 pgpool_recovery、pgpool_remote_start、pgpool_switch_xlog 等函数,因此我们需要在 PostgreSQL 服务器 server1 的 template1 上安装 pgpool_recovery。

[pg62]# su - postgres

[pg62]$ psql template1 -c "CREATE EXTENSION pgpool_recovery"

-- 过程

-bash-4.2$ psql template1 -c "CREATE EXTENSION pgpool_recovery"

CREATE EXTENSION

-bash-4.2$

注意:recovery_1st_stage 脚本不支持表空间。如果您使用表空间,则需要修改脚本以支持表空间。11、客户端身份验证配置

由于在“开始之前”部分我们已经将 PostgreSQL 的身份验证方法设置为 scram-sha-256,

因此需要通过 Pgpool-II 设置客户端身份验证才能连接到后端节点。

使用 RPM 安装时,Pgpool-II 的配置文件 pool_hba.conf 位于 /etc/pgpool-II 目录下。

默认情况下,pool_hba 身份验证处于禁用状态,需要设置 enable_pool_hba = on 才能启用。

vi /etc/pgpool-II/pgpool.conf

enable_pool_hba = on默认的身份验证密码文件名是 pool_passwd。要使用 scram-sha-256 身份验证,需要解密密钥来解密密码。我们在 Pgpool-II 启动用户 postgres(Pgpool-II 4.1 或更高版本)的主目录中创建 .pgpoolkey 文件。(Pgpool-II 4.0 或更早版本默认以 root 用户身份启动。)

pool_hba.conf 文件的格式与 PostgreSQL 的 pg_hba.conf 格式非常接近。将 pgpool 和 postgres 用户的身份验证方法设置为 scram-sha-256。

host all pgpool 0.0.0.0/0 scram-sha-256

host all postgres 0.0.0.0/0 scram-sha-256

# 命令

[pg62、pg63、pg64]# su - postgres

[pg62、pg63、pg64]$ echo 'Pgpool_key' > ~/.pgpoolkey

[pg62、pg63、pg64]$ chmod 600 ~/.pgpoolkey

-- 过程

-bash-4.2$ echo 'Pgpool_key' > ~/.pgpoolkey

-bash-4.2$ chmod 600 ~/.pgpoolkey

-bash-4.2$

执行命令 `pg_enc -m -k /path/to/.pgpoolkey -u username -p` 将用户名和 AES 加密密码注册到文件 `pool_passwd` 中。

如果 `pool_passwd` 文件尚不存在,则会在与 `pgpool.conf` 相同的目录下创建它。

# 命令

[pg62、pg63、pg64]# su - postgres

[pg62、pg63、pg64]$ pg_enc -m -k ~/.pgpoolkey -u pgpool -p

db password: [pgpool user's password]

[pg62、pg63、pg64]$ pg_enc -m -k ~/.pgpoolkey -u postgres -p

db password: [postgres user's password]

-- 过程

-bash-4.2$ pg_enc -m -k ~/.pgpoolkey -u pgpool -p

db password:

trying to read key from file /var/lib/pgsql/.pgpoolkey

-bash-4.2$

-bash-4.2$ pg_enc -m -k ~/.pgpoolkey -u postgres -p

db password:

trying to read key from file /var/lib/pgsql/.pgpoolkey

-bash-4.2$

-bash-4.2$ more /var/lib/pgsql/.pgpoolkey

Pgpool_key

-bash-4.2$ cat /etc/pgpool-II/pool_passwd

pgpool:AES2fO8b/EL17nuQKMibn/26A==

postgres:AESrJk+gGIl4VVgRcpbmT9mzQ==

-bash-4.2$ 12、看门狗配置

在pg62/pg63/pg64上启用看门狗功能

vi /etc/pgpool-II/pgpool.conf

use_watchdog = on

指定一个虚拟 IP 地址,该地址接受来自pg62/pg63/pg64上的客户端的连接。确保设置为虚拟 IP 的 IP 地址尚未被使用。

delegate_ip = '192.168.1.65'要启动/关闭虚拟 IP 并发送 ARP 请求,我们需要设置 if_up_cmd、if_down_cmd 和 arping_cmd。

本例中使用的网络接口是“enp0s8”。由于执行 if_up/down_cmd 或 arping_cmd 命令需要 root 权限,因此请使用 setuid 参数,或者允许 Pgpool-II 启动用户、postgres 用户(Pgpool-II 4.1 或更高版本)在无需密码的情况下运行 sudo 命令。

注意:如果 Pgpool-II 是使用 RPM 安装的,则 postgres 用户已配置为通过 sudo 运行 ip/arping 命令而无需密码。

所有服务器检查配置文件 /etc/sudoers

postgres ALL=NOPASSWD: /sbin/ip

postgres ALL=NOPASSWD: /usr/sbin/arping

yum安装的sudo配置在以下文件:

[root@pg62 sudoers.d]# more /etc/sudoers.d/pgpool

postgres ALL=NOPASSWD: /sbin/ip

postgres ALL=NOPASSWD: /usr/sbin/arping

#我们配置以下参数以使用 sudo 运行 if_up/down_cmd 或 arping_cmd。

vi /etc/pgpool-II/pgpool.conf

if_up_cmd = '/usr/bin/sudo /sbin/ip addr add $_IP_$/24 dev ens33 label ens33:0'

if_down_cmd = '/usr/bin/sudo /sbin/ip addr del $_IP_$/24 dev ens33'

arping_cmd = '/usr/bin/sudo /usr/sbin/arping -U $_IP_$ -w 1 -I ens33'

#请提供所有用于配置看门狗的 Pgpool-II 节点信息。

hostname0 = 'pg62'

wd_port0 = 9000

pgpool_port0 = 9999

hostname1 = 'pg63'

wd_port1 = 9000

pgpool_port1 = 9999

hostname2 = 'pg64'

wd_port2 = 9000

pgpool_port2 = 9999

#指定生命检查方法 wd_lifecheck_method 和生命检查间隔 wd_interval。这里,我们使用心跳方式执行看门狗生命检查。

wd_lifecheck_method = 'heartbeat'

wd_interval = 10

#指定所有用于发送和接收心跳信号的 Pgpool-II 节点信息。

heartbeat_hostname0 = 'pg62'

heartbeat_port0 = 9694

heartbeat_device0 = ''

heartbeat_hostname1 = 'pg63'

heartbeat_port1 = 9694

heartbeat_device1 = ''

heartbeat_hostname2 = 'pg64'

heartbeat_port2 = 9694

heartbeat_device2 = ''

#如果 wd_lifecheck_method 设置为 heartbeat,则指定检测故障的时间 wd_heartbeat_deadtime 和发送心跳信号的间隔 wd_heartbeat_deadtime。

wd_heartbeat_keepalive = 2

wd_heartbeat_deadtime = 30

#当 Watchdog 进程异常终止时,虚拟 IP 可能在新旧两个活动 pgpool 节点上都处于“启用”状态。

为防止这种情况,请配置 wd_escalation_command 命令,

在新的活动 pgpool 节点上启用虚拟 IP 之前,先将其他 pgpool 节点上的虚拟 IP 关闭。

wd_escalation_command = '/etc/pgpool-II/escalation.sh'/etc/pgpool-II/escalation.sh配置文件

[pg62,pg63,pg64]# vi /etc/pgpool-II/escalation.sh

...

PGPOOLS=(server1 server2 server3)

VIP=192.168.1.65

DEVICE=ens33

...

[root@pg62 sudoers.d]# more /etc/pgpool-II/escalation.sh

#!/bin/bash

# This script is run by wd_escalation_command to bring down the virtual IP on o

ther pgpool nodes

# before bringing up the virtual IP on the new active pgpool node.

set -o xtrace

POSTGRESQL_STARTUP_USER=postgres

SSH_KEY_FILE=id_rsa_pgpool

SSH_OPTIONS="-o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null -i ~/.

ssh/${SSH_KEY_FILE}"

SSH_TIMEOUT=5

PGPOOLS=(pg62 pg63 pg64)

VIP=192.168.1.65

DEVICE=ens33

for pgpool in "${PGPOOLS[@]}"; do

[ "$HOSTNAME" = "${pgpool}" ] && continue

timeout ${SSH_TIMEOUT} ssh -T ${SSH_OPTIONS} ${POSTGRESQL_STARTUP_USER}@${p

gpool} "

/usr/bin/sudo /sbin/ip addr del ${VIP}/24 dev ${DEVICE}

"

if [ $? -ne 0 ]; then

echo ERROR: escalation.sh: failed to release VIP on ${pgpool}.

fi

done

exit 0

[root@pg62 sudoers.d]#注意:如果您有偶数个看门狗节点,则需要启用 enable_consensus_with_half_votes 参数。

注意:如果启用了 use_watchdog,请确保在 pgpool_node_id 文件中指定了 pgpool 节点编号。

13、日志配置

## 记录日志

vi /etc/pgpool-II/pgpool.conf

log_destination = 'stderr'

logging_collector = on

log_directory = '/var/log/pgpool_log'

log_filename = 'pgpool-%Y-%m-%d_%H%M%S.log'

log_truncate_on_rotation = on

log_rotation_age = 1d

log_rotation_size = 10MB

- 创建日志目录

[pg62,pg63,pg64]# mkdir /var/log/pgpool_log/

[pg62,pg63,pg64]# chown postgres:postgres /var/log/pgpool_log/

[pg62]# scp -p /etc/pgpool-II/pgpool.conf root@pg63:/etc/pgpool-II/pgpool.conf

[pg62]# scp -p /etc/pgpool-II/pgpool.conf root@pg64:/etc/pgpool-II/pgpool.confpgpool.conf配置文件示例

[root@pg62 log]# cat /etc/pgpool-II/pgpool.conf

# ----------------------------

# pgPool-II configuration file

# ----------------------------

#

# This file consists of lines of the form:

#

# name = value

#

# Whitespace may be used. Comments are introduced with "#" anywhere on a line.

# The complete list of parameter names and allowed values can be found in the

# pgPool-II documentation.

#

# This file is read on server startup and when the server receives a SIGHUP

# signal. If you edit the file on a running system, you have to SIGHUP the

# server for the changes to take effect, or use "pgpool reload". Some

# parameters, which are marked below, require a server shutdown and restart to

# take effect.

#

#------------------------------------------------------------------------------

# BACKEND CLUSTERING MODE

# Choose one of: 'streaming_replication', 'native_replication',

# 'logical_replication', 'slony', 'raw' or 'snapshot_isolation'

# (change requires restart)

#------------------------------------------------------------------------------

backend_clustering_mode = 'streaming_replication'

#------------------------------------------------------------------------------

# CONNECTIONS

#------------------------------------------------------------------------------

# - pgpool Connection Settings -

listen_addresses = '*'

# what host name(s) or IP address(es) to listen on;

# comma-separated list of addresses;

# defaults to 'localhost'; use '*' for all

# (change requires restart)

port = 9999

# Port number

# (change requires restart)

unix_socket_directories = '/var/run/postgresql'

# Unix domain socket path(s)

# The Debian package defaults to

# /var/run/postgresql

# (change requires restart)

#unix_socket_group = ''

# The Owner group of Unix domain socket(s)

# (change requires restart)

#unix_socket_permissions = 0777

# Permissions of Unix domain socket(s)

# (change requires restart)

#reserved_connections = 0

# Number of reserved connections.

# Pgpool-II does not accept connections if over

# num_init_children - reserved_connections.

# - pgpool Communication Manager Connection Settings -

pcp_listen_addresses = '*'

# what host name(s) or IP address(es) for pcp process to listen on;

# comma-separated list of addresses;

# defaults to 'localhost'; use '*' for all

# (change requires restart)

pcp_port = 9898

# Port number for pcp

# (change requires restart)

pcp_socket_dir = '/var/run/postgresql'

# Unix domain socket path for pcp

# The Debian package defaults to

# /var/run/postgresql

# (change requires restart)

#listen_backlog_multiplier = 2

# Set the backlog parameter of listen(2) to

# num_init_children * listen_backlog_multiplier.

# (change requires restart)

#serialize_accept = off

# whether to serialize accept() call to avoid thundering herd problem

# (change requires restart)

# - Backend Connection Settings -

#backend_hostname0 = 'host1'

# Host name or IP address to connect to for backend 0

#backend_port0 = 5432

# Port number for backend 0

#backend_weight0 = 1

# Weight for backend 0 (only in load balancing mode)

#backend_data_directory0 = '/data'

# Data directory for backend 0

#backend_flag0 = 'ALLOW_TO_FAILOVER'

# Controls various backend behavior

# ALLOW_TO_FAILOVER, DISALLOW_TO_FAILOVER

# or ALWAYS_PRIMARY

#backend_application_name0 = 'server0'

# walsender's application_name, used for "show pool_nodes" command

#backend_hostname1 = 'host2'

#backend_port1 = 5433

#backend_weight1 = 1

#backend_data_directory1 = '/data1'

#backend_flag1 = 'ALLOW_TO_FAILOVER'

#backend_application_name1 = 'server1'

backend_application_name0 = 'pg62'

backend_hostname0 = 'pg62'

backend_port0 = 5432

backend_weight0 = 1

backend_data_directory0 = '/var/lib/pgsql/15/data'

backend_flag0 = 'ALLOW_TO_FAILOVER'

backend_application_name1 = 'pg63'

backend_hostname1 = 'pg63'

backend_port1 = 5432

backend_weight1 = 1

backend_data_directory1 = '/var/lib/pgsql/15/data'

backend_flag1 = 'ALLOW_TO_FAILOVER'

backend_application_name2 = 'pg64'

backend_hostname2 = 'pg64'

backend_port2 = 5432

backend_weight2 = 1

backend_data_directory2 = '/var/lib/pgsql/15/data'

backend_flag2 = 'ALLOW_TO_FAILOVER'

# - Authentication -

enable_pool_hba = on

# Use pool_hba.conf for client authentication

#pool_passwd = 'pool_passwd'

# File name of pool_passwd for md5 authentication.

# "" disables pool_passwd.

# (change requires restart)

#authentication_timeout = 1min

# Delay in seconds to complete client authentication

# 0 means no timeout.

#allow_clear_text_frontend_auth = off

# Allow Pgpool-II to use clear text password authentication

# with clients, when pool_passwd does not

# contain the user password

# - SSL Connections -

#ssl = off

# Enable SSL support

# (change requires restart)

#ssl_key = 'server.key'

# SSL private key file

# (change requires restart)

#ssl_cert = 'server.crt'

# SSL public certificate file

# (change requires restart)

#ssl_ca_cert = ''

# Single PEM format file containing

# CA root certificate(s)

# (change requires restart)

#ssl_ca_cert_dir = ''

# Directory containing CA root certificate(s)

# (change requires restart)

#ssl_crl_file = ''

# SSL certificate revocation list file

# (change requires restart)

#ssl_ciphers = 'HIGH:MEDIUM:+3DES:!aNULL'

# Allowed SSL ciphers

# (change requires restart)

#ssl_prefer_server_ciphers = off

# Use server's SSL cipher preferences,

# rather than the client's

# (change requires restart)

#ssl_ecdh_curve = 'prime256v1'

# Name of the curve to use in ECDH key exchange

#ssl_dh_params_file = ''

# Name of the file containing Diffie-Hellman parameters used

# for so-called ephemeral DH family of SSL cipher.

#ssl_passphrase_command=''

# Sets an external command to be invoked when a passphrase

# for decrypting an SSL file needs to be obtained

# (change requires restart)

#------------------------------------------------------------------------------

# POOLS

#------------------------------------------------------------------------------

# - Concurrent session and pool size -

#process_management_mode = static

# process management mode for child processes

# Valid options:

# static: all children are pre-forked at startup

# dynamic: child processes are spawned on demand.

# number of idle child processes at any time are

# configured by min_spare_children and max_spare_children

#process_management_strategy = gentle

# process management strategy to satisfy spare processes

# Valid options:

#

# lazy: In this mode, the scale-down is performed gradually

# and only gets triggered when excessive spare processes count

# remains high for more than 5 mins

#

# gentle: In this mode, the scale-down is performed gradually

# and only gets triggered when excessive spare processes count

# remains high for more than 2 mins

#

# aggressive: In this mode, the scale-down is performed aggressively

# and gets triggered more frequently in case of higher spare processes.

# This mode uses faster and slightly less smart process selection criteria

# to identify the child processes that can be serviced to satisfy

# max_spare_children

#

# (Only applicable for dynamic process management mode)

#num_init_children = 32

# Maximum Number of concurrent sessions allowed

# (change requires restart)

#min_spare_children = 5

# Minimum number of spare child processes waiting for connection

# (Only applicable for dynamic process management mode)

#max_spare_children = 10

# Maximum number of idle child processes waiting for connection

# (Only applicable for dynamic process management mode)

#max_pool = 4

# Number of connection pool caches per connection

# (change requires restart)

# - Life time -

#child_life_time = 5min

# Pool exits after being idle for this many seconds

#child_max_connections = 0

# Pool exits after receiving that many connections

# 0 means no exit

#connection_life_time = 0

# Connection to backend closes after being idle for this many seconds

# 0 means no close

#client_idle_limit = 0

# Client is disconnected after being idle for that many seconds

# (even inside an explicit transactions!)

# 0 means no disconnection

#------------------------------------------------------------------------------

# LOGS

#------------------------------------------------------------------------------

# - Where to log -

log_destination = 'stderr'

# Where to log

# Valid values are combinations of stderr,

# and syslog. Default to stderr.

# - What to log -

#log_line_prefix = '%m: %a pid %p: ' # printf-style string to output at beginning of each log line.

#log_connections = off

# Log connections

#log_disconnections = off

# Log disconnections

#log_hostname = off

# Hostname will be shown in ps status

# and in logs if connections are logged

log_statement = all

# Log all statements

log_per_node_statement = on

# Log all statements

# with node and backend informations

#log_client_messages = off

# Log any client messages

#log_standby_delay = 'if_over_threshold'

# Log standby delay

# Valid values are combinations of always,

# if_over_threshold, none

# - Syslog specific -

#syslog_facility = 'LOCAL0'

# Syslog local facility. Default to LOCAL0

#syslog_ident = 'pgpool'

# Syslog program identification string

# Default to 'pgpool'

# - Debug -

#log_error_verbosity = default # terse, default, or verbose messages

client_min_messages = log # values in order of decreasing detail:

# debug5

# debug4

# debug3

# debug2

# debug1

# log

# notice

# warning

# error

log_min_messages = info # values in order of decreasing detail:

# debug5

# debug4

# debug3

# debug2

# debug1

# info

# notice

# warning

# error

# log

# fatal

# panic

# This is used when logging to stderr:

logging_collector = on

# Enable capturing of stderr

# into log files.

# (change requires restart)

# -- Only used if logging_collector is on ---

log_directory = '/var/log/pgpool_log'

# directory where log files are written,

# can be absolute

log_filename = 'pgpool-%Y-%m-%d_%H%M%S.log'

# log file name pattern,

# can include strftime() escapes

#log_file_mode = 0600

# creation mode for log files,

# begin with 0 to use octal notation

log_truncate_on_rotation = on

# If on, an existing log file with the

# same name as the new log file will be

# truncated rather than appended to.

# But such truncation only occurs on

# time-driven rotation, not on restarts

# or size-driven rotation. Default is

# off, meaning append to existing files

# in all cases.

log_rotation_age = 1d

# Automatic rotation of logfiles will

# happen after that (minutes)time.

# 0 disables time based rotation.

log_rotation_size = 10MB

# Automatic rotation of logfiles will

# happen after that much (KB) log output.

# 0 disables size based rotation.

#------------------------------------------------------------------------------

# FILE LOCATIONS

#------------------------------------------------------------------------------

#pid_file_name = '/var/run/pgpool/pgpool.pid'

# PID file name

# Can be specified as relative to the"

# location of pgpool.conf file or

# as an absolute path

# (change requires restart)

#logdir = '/tmp'

# Directory of pgPool status file

# (change requires restart)

#------------------------------------------------------------------------------

# CONNECTION POOLING

#------------------------------------------------------------------------------

#connection_cache = on

# Activate connection pools

# (change requires restart)

# Semicolon separated list of queries

# to be issued at the end of a session

# The default is for 8.3 and later

#reset_query_list = 'ABORT; DISCARD ALL'

# The following one is for 8.2 and before

#reset_query_list = 'ABORT; RESET ALL; SET SESSION AUTHORIZATION DEFAULT'

#------------------------------------------------------------------------------

# REPLICATION MODE

#------------------------------------------------------------------------------

#replicate_select = off

# Replicate SELECT statements

# when in replication mode

# replicate_select is higher priority than

# load_balance_mode.

#insert_lock = on

# Automatically locks a dummy row or a table

# with INSERT statements to keep SERIAL data

# consistency

# Without SERIAL, no lock will be issued

#lobj_lock_table = ''

# When rewriting lo_creat command in

# replication mode, specify table name to

# lock

# - Degenerate handling -

#replication_stop_on_mismatch = off

# On disagreement with the packet kind

# sent from backend, degenerate the node

# which is most likely "minority"

# If off, just force to exit this session

#failover_if_affected_tuples_mismatch = off

# On disagreement with the number of affected

# tuples in UPDATE/DELETE queries, then

# degenerate the node which is most likely

# "minority".

# If off, just abort the transaction to

# keep the consistency

#------------------------------------------------------------------------------

# LOAD BALANCING MODE

#------------------------------------------------------------------------------

load_balance_mode = on

# Activate load balancing mode

# (change requires restart)

ignore_leading_white_space = on

# Ignore leading white spaces of each query

#read_only_function_list = ''

# Comma separated list of function names

# that don't write to database

# Regexp are accepted

#write_function_list = ''

# Comma separated list of function names

# that write to database

# Regexp are accepted

# If both read_only_function_list and write_function_list

# is empty, function's volatile property is checked.

# If it's volatile, the function is regarded as a

# writing function.

#primary_routing_query_pattern_list = ''

# Semicolon separated list of query patterns

# that should be sent to primary node

# Regexp are accepted

# valid for streaming replication mode only.

#database_redirect_preference_list = ''

# comma separated list of pairs of database and node id.

# example: postgres:primary,mydb[0-4]:1,mydb[5-9]:2'

# valid for streaming replication mode only.

#app_name_redirect_preference_list = ''

# comma separated list of pairs of app name and node id.

# example: 'psql:primary,myapp[0-4]:1,myapp[5-9]:standby'

# valid for streaming replication mode only.

#allow_sql_comments = off

# if on, ignore SQL comments when judging if load balance or

# query cache is possible.

# If off, SQL comments effectively prevent the judgment

# (pre 3.4 behavior).

#disable_load_balance_on_write = 'transaction'

# Load balance behavior when write query is issued

# in an explicit transaction.

#

# Valid values:

#

# 'transaction' (default):

# if a write query is issued, subsequent

# read queries will not be load balanced

# until the transaction ends.

#

# 'trans_transaction':

# if a write query is issued, subsequent

# read queries in an explicit transaction

# will not be load balanced until the session ends.

#

# 'dml_adaptive':

# Queries on the tables that have already been

# modified within the current explicit transaction will

# not be load balanced until the end of the transaction.

#

# 'always':

# if a write query is issued, read queries will

# not be load balanced until the session ends.

#

# Note that any query not in an explicit transaction

# is not affected by the parameter except 'always'.

#dml_adaptive_object_relationship_list= ''

# comma separated list of object pairs

# [object]:[dependent-object], to disable load balancing

# of dependent objects within the explicit transaction

# after WRITE statement is issued on (depending-on) object.

#

# example: 'tb_t1:tb_t2,insert_tb_f_func():tb_f,tb_v:my_view'

# Note: function name in this list must also be present in

# the write_function_list

# only valid for disable_load_balance_on_write = 'dml_adaptive'.

#statement_level_load_balance = off

# Enables statement level load balancing

#------------------------------------------------------------------------------

# STREAMING REPLICATION MODE

#------------------------------------------------------------------------------

# - Streaming -

#sr_check_period = 10

# Streaming replication check period

# Default is 10s.

sr_check_user = 'pgpool'

# Streaming replication check user

# This is necessary even if you disable streaming

# replication delay check by sr_check_period = 0

sr_check_password = ''

# Password for streaming replication check user

# Leaving it empty will make Pgpool-II to first look for the

# Password in pool_passwd file before using the empty password

#sr_check_database = 'postgres'

# Database name for streaming replication check

#delay_threshold = 0

# Threshold before not dispatching query to standby node

# Unit is in bytes

# Disabled (0) by default

#delay_threshold_by_time = 0

# Threshold before not dispatching query to standby node

# Unit is in second(s)

# Disabled (0) by default

#prefer_lower_delay_standby = off

# If delay_threshold is set larger than 0, Pgpool-II send to

# the primary when selected node is delayed over delay_threshold.

# If this is set to on, Pgpool-II send query to other standby

# delayed lower.

# - Special commands -

#follow_primary_command = ''

# Executes this command after main node failover

# Special values:

# %d = failed node id

# %h = failed node host name

# %p = failed node port number

# %D = failed node database cluster path

# %m = new main node id

# %H = new main node hostname

# %M = old main node id

# %P = old primary node id

# %r = new main port number

# %R = new main database cluster path

# %N = old primary node hostname

# %S = old primary node port number

# %% = '%' character

#------------------------------------------------------------------------------

# HEALTH CHECK GLOBAL PARAMETERS

#------------------------------------------------------------------------------

health_check_period = 5

# Health check period

# Disabled (0) by default

health_check_timeout = 30

# Health check timeout

# 0 means no timeout

health_check_user = 'pgpool'

# Health check user

health_check_password = ''

# Password for health check user

# Leaving it empty will make Pgpool-II to first look for the

# Password in pool_passwd file before using the empty password

#health_check_database = ''

# Database name for health check. If '', tries 'postgres' frist,

health_check_max_retries = 3

# Maximum number of times to retry a failed health check before giving up.

#health_check_retry_delay = 1

# Amount of time to wait (in seconds) between retries.

#connect_timeout = 10000

# Timeout value in milliseconds before giving up to connect to backend.

# Default is 10000 ms (10 second). Flaky network user may want to increase

# the value. 0 means no timeout.

# Note that this value is not only used for health check,

# but also for ordinary connection to backend.

#------------------------------------------------------------------------------

# HEALTH CHECK PER NODE PARAMETERS (OPTIONAL)

#------------------------------------------------------------------------------

#health_check_period0 = 0

#health_check_timeout0 = 20

#health_check_user0 = 'nobody'

#health_check_password0 = ''

#health_check_database0 = ''

#health_check_max_retries0 = 0

#health_check_retry_delay0 = 1

#connect_timeout0 = 10000

#------------------------------------------------------------------------------

# FAILOVER AND FAILBACK

#------------------------------------------------------------------------------

#failover_command = ''

# Executes this command at failover

# Special values:

# %d = failed node id

# %h = failed node host name

# %p = failed node port number

# %D = failed node database cluster path

# %m = new main node id

# %H = new main node hostname

# %M = old main node id

# %P = old primary node id

# %r = new main port number

# %R = new main database cluster path

# %N = old primary node hostname

# %S = old primary node port number

# %% = '%' character

#failback_command = ''

# Executes this command at failback.

# Special values:

# %d = failed node id

# %h = failed node host name

# %p = failed node port number

# %D = failed node database cluster path

# %m = new main node id

# %H = new main node hostname

# %M = old main node id

# %P = old primary node id

# %r = new main port number

# %R = new main database cluster path

# %N = old primary node hostname

# %S = old primary node port number

# %% = '%' character

#failover_on_backend_error = on

# Initiates failover when reading/writing to the

# backend communication socket fails

# If set to off, pgpool will report an

# error and disconnect the session.

#failover_on_backend_shutdown = off

# Initiates failover when backend is shutdown,

# or backend process is killed.

# If set to off, pgpool will report an

# error and disconnect the session.

#detach_false_primary = off

# Detach false primary if on. Only

# valid in streaming replication

# mode and with PostgreSQL 9.6 or

# after.

#search_primary_node_timeout = 5min

# Timeout in seconds to search for the

# primary node when a failover occurs.

# 0 means no timeout, keep searching

# for a primary node forever.

failover_command = '/etc/pgpool-II/failover.sh %d %h %p %D %m %H %M %P %r %R %N %S'

follow_primary_command = '/etc/pgpool-II/follow_primary.sh %d %h %p %D %m %H %M %P %r %R'

#------------------------------------------------------------------------------

# ONLINE RECOVERY

#------------------------------------------------------------------------------

recovery_user = 'postgres'

# Online recovery user

recovery_password = ''

# Online recovery password

# Leaving it empty will make Pgpool-II to first look for the

# Password in pool_passwd file before using the empty password

recovery_1st_stage_command = 'recovery_1st_stage'

# Executes a command in first stage

#recovery_2nd_stage_command = ''

# Executes a command in second stage

#recovery_timeout = 90

# Timeout in seconds to wait for the

# recovering node's postmaster to start up

# 0 means no wait

#client_idle_limit_in_recovery = 0

# Client is disconnected after being idle

# for that many seconds in the second stage

# of online recovery

# 0 means no disconnection

# -1 means immediate disconnection

#auto_failback = off

# Detached backend node reattach automatically

# if replication_state is 'streaming'.

#auto_failback_interval = 1min

# Min interval of executing auto_failback in

# seconds.

#------------------------------------------------------------------------------

# WATCHDOG

#------------------------------------------------------------------------------

# - Enabling -

use_watchdog = on

# Activates watchdog

# (change requires restart)

# -Connection to upstream servers -

#trusted_servers = ''

# trusted server list which are used

# to confirm network connection

# (hostA,hostB,hostC,...)

# (change requires restart)

#trusted_server_command = 'ping -q -c3 %h'

# Command to execute when communicate trusted server.

# Special values:

# %h = host name specified by trusted_servers

# - Watchdog communication Settings -

#hostname0 = ''

# Host name or IP address of pgpool node

# for watchdog connection

# (change requires restart)

#wd_port0 = 9000

# Port number for watchdog service

# (change requires restart)

#pgpool_port0 = 9999

# Port number for pgpool

# (change requires restart)

#hostname1 = ''

#wd_port1 = 9000

#pgpool_port1 = 9999

#hostname2 = ''

#wd_port2 = 9000

#pgpool_port2 = 9999

hostname0 = 'pg62'

wd_port0 = 9000

pgpool_port0 = 9999

hostname1 = 'pg63'

wd_port1 = 9000

pgpool_port1 = 9999

hostname2 = 'pg64'

wd_port2 = 9000

pgpool_port2 = 9999

#wd_priority = 1

# priority of this watchdog in leader election

# (change requires restart)

#wd_authkey = ''

# Authentication key for watchdog communication

# (change requires restart)

wd_ipc_socket_dir = '/var/run/postgresql'

# Unix domain socket path for watchdog IPC socket

# The Debian package defaults to

# /var/run/postgresql

# (change requires restart)

# - Virtual IP control Setting -

delegate_ip = '192.168.1.65'

# delegate IP address

# If this is empty, virtual IP never bring up.

# (change requires restart)

#if_cmd_path = '/sbin'

# path to the directory where if_up/down_cmd exists

# If if_up/down_cmd starts with "/", if_cmd_path will be ignored.

# (change requires restart)

#if_up_cmd = '/usr/bin/sudo /sbin/ip addr add $_IP_$/24 dev eth0 label eth0:0'

# startup delegate IP command

# (change requires restart)

#if_down_cmd = '/usr/bin/sudo /sbin/ip addr del $_IP_$/24 dev eth0'

# shutdown delegate IP command

# (change requires restart)

#arping_path = '/usr/sbin'

# arping command path

# If arping_cmd starts with "/", if_cmd_path will be ignored.

# (change requires restart)

#arping_cmd = '/usr/bin/sudo /usr/sbin/arping -U $_IP_$ -w 1 -I eth0'

# arping command

# (change requires restart)

if_up_cmd = '/usr/bin/sudo /sbin/ip addr add $_IP_$/24 dev ens33 label ens33:0'

if_down_cmd = '/usr/bin/sudo /sbin/ip addr del $_IP_$/24 dev ens33'

arping_cmd = '/usr/bin/sudo /usr/sbin/arping -U $_IP_$ -w 1 -I ens33'

#ping_path = '/bin'

# ping command path

# (change requires restart)

# - Behaivor on escalation Setting -

#clear_memqcache_on_escalation = on

# Clear all the query cache on shared memory

# when standby pgpool escalate to active pgpool

# (= virtual IP holder).

# This should be off if client connects to pgpool

# not using virtual IP.

# (change requires restart)

#wd_escalation_command = ''

# Executes this command at escalation on new active pgpool.

# (change requires restart)

wd_escalation_command = '/etc/pgpool-II/escalation.sh'

#wd_de_escalation_command = ''

# Executes this command when leader pgpool resigns from being leader.

# (change requires restart)

# - Watchdog consensus settings for failover -

#failover_when_quorum_exists = on

# Only perform backend node failover

# when the watchdog cluster holds the quorum

# (change requires restart)

#failover_require_consensus = on

# Perform failover when majority of Pgpool-II nodes

# aggrees on the backend node status change

# (change requires restart)

#allow_multiple_failover_requests_from_node = off

# A Pgpool-II node can cast multiple votes

# for building the consensus on failover

# (change requires restart)

#enable_consensus_with_half_votes = off

# apply majority rule for consensus and quorum computation

# at 50% of votes in a cluster with even number of nodes.

# when enabled the existence of quorum and consensus

# on failover is resolved after receiving half of the

# total votes in the cluster, otherwise both these

# decisions require at least one more vote than

# half of the total votes.

# (change requires restart)

# - Watchdog cluster membership settings for quorum computation -

#wd_remove_shutdown_nodes = off

# when enabled cluster membership of properly shutdown

# watchdog nodes gets revoked, After that the node does

# not count towards the quorum and consensus computations

#wd_lost_node_removal_timeout = 0s

# Timeout after which the cluster membership of LOST watchdog

# nodes gets revoked. After that the node node does not

# count towards the quorum and consensus computations

# setting timeout to 0 will never revoke the membership

# of LOST nodes

#wd_no_show_node_removal_timeout = 0s

# Time to wait for Watchdog node to connect to the cluster.

# After that time the cluster membership of NO-SHOW node gets

# revoked and it does not count towards the quorum and

# consensus computations

# setting timeout to 0 will not revoke the membership

# of NO-SHOW nodes

# - Lifecheck Setting -

# -- common --

#wd_monitoring_interfaces_list = ''

# Comma separated list of interfaces names to monitor.

# if any interface from the list is active the watchdog will

# consider the network is fine

# 'any' to enable monitoring on all interfaces except loopback

# '' to disable monitoring

# (change requires restart)

wd_lifecheck_method = 'heartbeat'

# Method of watchdog lifecheck ('heartbeat' or 'query' or 'external')

# (change requires restart)

wd_interval = 10

# lifecheck interval (sec) > 0

# (change requires restart)

# -- heartbeat mode --

#heartbeat_hostname0 = ''

# Host name or IP address used

# for sending heartbeat signal.

# (change requires restart)

#heartbeat_port0 = 9694

# Port number used for receiving/sending heartbeat signal

# Usually this is the same as heartbeat_portX.

# (change requires restart)

#heartbeat_device0 = ''

# Name of NIC device (such like 'eth0')

# used for sending/receiving heartbeat

# signal to/from destination 0.

# This works only when this is not empty

# and pgpool has root privilege.

# (change requires restart)

#heartbeat_hostname1 = ''

#heartbeat_port1 = 9694

#heartbeat_device1 = ''

#heartbeat_hostname2 = ''

#heartbeat_port2 = 9694

#heartbeat_device2 = ''

heartbeat_hostname0 = 'pg62'

heartbeat_port0 = 9694

heartbeat_device0 = ''

heartbeat_hostname1 = 'pg63'

heartbeat_port1 = 9694

heartbeat_device1 = ''

heartbeat_hostname2 = 'pg64'

heartbeat_port2 = 9694

heartbeat_device2 = ''

wd_heartbeat_keepalive = 2

# Interval time of sending heartbeat signal (sec)

# (change requires restart)

wd_heartbeat_deadtime = 30

# Deadtime interval for heartbeat signal (sec)

# (change requires restart)

# -- query mode --

#wd_life_point = 3

# lifecheck retry times

# (change requires restart)

#wd_lifecheck_query = 'SELECT 1'

# lifecheck query to pgpool from watchdog

# (change requires restart)

#wd_lifecheck_dbname = 'template1'

# Database name connected for lifecheck

# (change requires restart)

#wd_lifecheck_user = 'nobody'

# watchdog user monitoring pgpools in lifecheck

# (change requires restart)

#wd_lifecheck_password = ''

# Password for watchdog user in lifecheck

# Leaving it empty will make Pgpool-II to first look for the

# Password in pool_passwd file before using the empty password

# (change requires restart)

#------------------------------------------------------------------------------

# OTHERS

#------------------------------------------------------------------------------

#relcache_expire = 0

# Life time of relation cache in seconds.

# 0 means no cache expiration(the default).

# The relation cache is used for cache the

# query result against PostgreSQL system

# catalog to obtain various information

# including table structures or if it's a

# temporary table or not. The cache is

# maintained in a pgpool child local memory

# and being kept as long as it survives.

# If someone modify the table by using

# ALTER TABLE or some such, the relcache is

# not consistent anymore.

# For this purpose, cache_expiration

# controls the life time of the cache.

#relcache_size = 256

# Number of relation cache

# entry. If you see frequently:

# "pool_search_relcache: cache replacement happened"

# in the pgpool log, you might want to increate this number.

#check_temp_table = catalog

# Temporary table check method. catalog, trace or none.

# Default is catalog.

#check_unlogged_table = on

# If on, enable unlogged table check in SELECT statements.

# This initiates queries against system catalog of primary/main

# thus increases load of primary.

# If you are absolutely sure that your system never uses unlogged tables

# and you want to save access to primary/main, you could turn this off.

# Default is on.

#enable_shared_relcache = on

# If on, relation cache stored in memory cache,

# the cache is shared among child process.

# Default is on.

# (change requires restart)

#relcache_query_target = primary

# Target node to send relcache queries. Default is primary node.

# If load_balance_node is specified, queries will be sent to load balance node.

#------------------------------------------------------------------------------

# IN MEMORY QUERY MEMORY CACHE

#------------------------------------------------------------------------------

#memory_cache_enabled = off

# If on, use the memory cache functionality, off by default

# (change requires restart)

#memqcache_method = 'shmem'

# Cache storage method. either 'shmem'(shared memory) or

# 'memcached'. 'shmem' by default

# (change requires restart)

#memqcache_memcached_host = 'localhost'

# Memcached host name or IP address. Mandatory if

# memqcache_method = 'memcached'.

# Defaults to localhost.

# (change requires restart)

#memqcache_memcached_port = 11211

# Memcached port number. Mondatory if memqcache_method = 'memcached'.

# Defaults to 11211.

# (change requires restart)

#memqcache_total_size = 64MB

# Total memory size in bytes for storing memory cache.

# Mandatory if memqcache_method = 'shmem'.

# Defaults to 64MB.

# (change requires restart)

#memqcache_max_num_cache = 1000000

# Total number of cache entries. Mandatory

# if memqcache_method = 'shmem'.

# Each cache entry consumes 48 bytes on shared memory.

# Defaults to 1,000,000(45.8MB).

# (change requires restart)

#memqcache_expire = 0

# Memory cache entry life time specified in seconds.

# 0 means infinite life time. 0 by default.

# (change requires restart)

#memqcache_auto_cache_invalidation = on

# If on, invalidation of query cache is triggered by corresponding

# DDL/DML/DCL(and memqcache_expire). If off, it is only triggered

# by memqcache_expire. on by default.

# (change requires restart)

#memqcache_maxcache = 400kB

# Maximum SELECT result size in bytes.

# Must be smaller than memqcache_cache_block_size. Defaults to 400KB.

# (change requires restart)

#memqcache_cache_block_size = 1MB

# Cache block size in bytes. Mandatory if memqcache_method = 'shmem'.

# Defaults to 1MB.

# (change requires restart)

#memqcache_oiddir = '/var/log/pgpool/oiddir'

# Temporary work directory to record table oids

# (change requires restart)

#cache_safe_memqcache_table_list = ''

# Comma separated list of table names to memcache

# that don't write to database

# Regexp are accepted

#cache_unsafe_memqcache_table_list = ''

# Comma separated list of table names not to memcache

# that don't write to database

# Regexp are accepted

[root@pg62 log]# pgpool-II启动与关闭

接下来启动 Pgpool-II。启动 Pgpool-II 之前,请先启动 PostgreSQL 服务器。同样,停止 PostgreSQL 时,也必须先停止 Pgpool-II。

[pg62]# su - postgres

[pg62]$ /usr/pgsql-15/bin/pg_ctl start -D $PGDATA

查看状态,未启动则启动数据库

-bash-4.2$ /usr/pgsql-15/bin/pg_ctl status -D $PGDATA

pg_ctl: server is running (PID: 27769)

/usr/pgsql-15/bin/postgres "-D" "/var/lib/pgsql/15/data"

#启动pgpool

# systemctl start pgpool.service

# systemctl stop pgpool.service

- pg62,pg63,pg64

[root@pg62 sudoers.d]# systemctl start pgpool.service

[root@pg62 sudoers.d]#

[root@pg62 sudoers.d]#

[root@pg62 sudoers.d]# systemctl status pgpool.service

● pgpool.service - Pgpool-II

Loaded: loaded (/usr/lib/systemd/system/pgpool.service; disabled; vendor preset: disabled)

Active: active (running) since Thu 2025-12-11 15:09:12 CST; 3s ago

Main PID: 87856 (pgpool)

Tasks: 3

CGroup: /system.slice/pgpool.service

├─87856 /usr/bin/pgpool -f /etc/pgpool-II/pgpool.conf -n

├─87857 pgpool: PgpoolLogger

└─87859 pgpool: watchdog

Dec 11 15:09:12 pg62 systemd[1]: Started Pgpool-II.

Dec 11 15:09:12 pg62 pgpool[87856]: 2025-12-11 15:09:12.738: main pid 8785...st

Dec 11 15:09:12 pg62 pgpool[87856]: 2025-12-11 15:09:12.738: main pid 8785...ss

Dec 11 15:09:12 pg62 pgpool[87856]: 2025-12-11 15:09:12.738: main pid 8785...".

Hint: Some lines were ellipsized, use -l to show in full.

[root@pg62 sudoers.d]#

五、创建测试表

CREATE TABLE customers (

customer_id SERIAL PRIMARY KEY,

name TEXT,

region TEXT,

join_date TIMESTAMP

);

CREATE TABLE products (

product_id SERIAL PRIMARY KEY,

name TEXT,

category TEXT,

price NUMERIC(10,2)

);

-- ========================================

-- Step 2. 批量插入测试数据

-- ========================================

-- 2.1 插入 customers(10万)

DECLARE

b int;

BEGIN

FOR b IN 1..1 LOOP

INSERT INTO customers (name, region, join_date)

SELECT

'Customer_' || (gs + (b-1)*100000),

CASE WHEN random() < 0.33 THEN 'APAC'

WHEN random() < 0.66 THEN 'EMEA'

ELSE 'AMER' END,

now() - make_interval(days => floor(random() * 365)::int)

FROM generate_series(1,100000) AS gs;

RAISE NOTICE 'customers batch % done', b;

END LOOP;

END $$;

-- 2.2 插入 products(10万)

DO $$

DECLARE

b int;

BEGIN

FOR b IN 1..1 LOOP

INSERT INTO products (name, category, price)

SELECT

'Product_' || (gs + (b-1)*100000),

CASE WHEN random() < 0.5 THEN 'Electronics'

WHEN random() < 0.8 THEN 'Clothing'

ELSE 'Food' END,

round((random() * 500 + 1)::numeric, 2)

FROM generate_series(1,100000) AS gs;

RAISE NOTICE 'products batch % done', b;

END LOOP;

END $$;

-- ========================================

-- Step 3. 建立索引

-- ========================================

CREATE INDEX idx_customers_region ON customers(region);

CREATE INDEX idx_products_cat ON products(category);

VACUUM ANALYZE;六、pgpool-II使用

pcp_recovery_node 创建备份服务器

我们需要使用 Pgpool-II 在线恢复功能来设置 PostgreSQL 备用服务器。请确保 pcp_recovery_node 命令使用的 recovery_1st_stage 和 pgpool_remote_start 脚本位于 PostgreSQL 主服务器(pg62)的数据库集群目录中。

执行结果

[root@pg62 pgpool-II]#

[root@pg62 pgpool-II]# pcp_recovery_node -h 192.168.1.62 -p 9898 -U pgpool -n 1

Password:

pcp_recovery_node -- Command Successful

[root@pg62 pgpool-II]#

[root@pg62 pgpool-II]#

[root@pg62 pgpool-II]# pcp_recovery_node -h 192.168.1.62 -p 9898 -U pgpool -n 2

Password:

pcp_recovery_node -- Command Successful

[root@pg62 pgpool-II]# 主库查看复制情况

postgres=# x

Expanded display is on.

postgres=# select * from pg_stat_replication;

-[ RECORD 1 ]----+------------------------------

pid | 108450

usesysid | 16389

usename | repl

application_name | walreceiver

client_addr | 192.168.1.63

client_hostname |

client_port | 30583

backend_start | 2025-12-12 10:26:33.883833+08

backend_xmin |

state | streaming

sent_lsn | 0/9000148 <<此连接上发送的最后一个预写式日志位置

write_lsn | 0/9000148 <<此备用服务器写入磁盘的最后一个预写式日志位置

flush_lsn | 0/9000148 <<此备用服务器刷新到磁盘的最后一个预写式日志位置

replay_lsn | 0/9000148 <<此备用服务器上重放到数据库的最后一个预写式日志位置

write_lag | <<从本地刷新最近的预写式日志到收到此备用服务器已写入该日志(但尚未刷新或应用)的通知之间的时间间隔。如果此服务器配置为同步备用服务器,则可以使用此值来衡量同步提交级别 remote_write 在提交时产生的延迟。

flush_lag | <<从本地刷新最近的 WAL 日志到收到备用服务器已写入并刷新(但尚未应用)该日志的通知之间所经过的时间。如果此服务器配置为同步备用服务器,则可以使用此间隔来衡量 synchronize_commit 级别 on 在提交过程中产生的延迟。

replay_lag | <<从本地刷新最近的 WAL 日志到收到备用服务器已写入、刷新并应用该日志的通知之间所经过的时间。如果此服务器配置为同步备用服务器,则可以使用此间隔来衡量 synchronize_commit 级别 remote_apply 在提交过程中产生的延迟。

sync_priority | 0

sync_state | async

reply_time | 2025-12-12 10:38:58.040977+08

-[ RECORD 2 ]----+------------------------------

pid | 109300

usesysid | 16389

usename | repl

application_name | walreceiver

client_addr | 192.168.1.64

client_hostname |

client_port | 41289

backend_start | 2025-12-12 10:29:36.103449+08

backend_xmin |

state | streaming

sent_lsn | 0/9000148

write_lsn | 0/9000148

flush_lsn | 0/9000148

replay_lsn | 0/9000148

write_lag |

flush_lag |

replay_lag |

sync_priority | 0

sync_state | async

reply_time | 2025-12-12 10:38:57.837526+08查看节点状态

[root@pg62 pgpool-II]# psql -h 192.168.1.65 -p 9999 -U pgpool postgres -c "show pool_nodes"

Password for user pgpool:

LOG: statement: show pool_nodes

LOG: DB node id: 0 backend pid: 27498 statement: SELECT pg_catalog.version()

node_id | hostname | port | status | pg_status | lb_weight | role | pg_role | select_cnt | load_balance_node | replication_delay | replication_state | replication_sync_state | last_status_change

---------+----------+------+--------+-----------+-----------+---------+---------+------------+-------------------+-------------------+-------------------+------------------------+---------------------

0 | pg62 | 5432 | up | up | 0.333333 | primary | primary | 0 | true | 0 | | | 2025-12-12 15:31:36

1 | pg63 | 5432 | up | up | 0.333333 | standby | standby | 0 | false | 0 | | | 2025-12-12 15:31:36

2 | pg64 | 5432 | up | up | 0.333333 | standby | standby | 0 | false | 0 | | | 2025-12-12 15:31:36

(3 rows)负载均衡测试

## 负载均衡测试

vi pgpool.conf

log_statement=all

log_per_node_statement = on

client_min_messages = log

log_min_messages = info

##重启pgpool服务或者reload

systemctl stop pgpool && systemctl start pgpool

or

pgpool reload查看读写操作分发到的节点

[root@pg63 pgpool-II]# psql -h 192.168.1.65 -p 9999 -U postgres wky -c "select * from customers where customer_id =5"

Password for user postgres:

LOG: statement: select * from customers where customer_id =5

LOG: DB node id: 0 backend pid: 29957 statement: SELECT count(*) FROM pg_catalog.pg_class AS c, pg_catalog.pg_namespace AS n WHERE c.relname = 'customers' AND c.relnamespace = n.oid AND n.nspname ~ '^pg_temp_'

>> LOG: DB node id: 2 backend pid: 29598 statement: select * from customers where customer_id =5

customer_id | name | region | join_date

-------------+--------+--------+----------------------------

5 | wky666 | APAC | 2025-07-08 09:34:43.339206

(1 row)

wky=# update customers set name = 'wky666' where customer_id =5;

LOG: statement: update customers set name = 'wky666' where customer_id =5;

LOG: DB node id: 0 backend pid: 30377 statement: update customers set name = 'wky666' where customer_id =5;

UPDATE 1

wky=# commit;

[root@pg62 pgpool-II]# psql -h 192.168.1.65 -p 9999 -U pgpool postgres -c "show pool_nodes"

Password for user pgpool:

LOG: statement: show pool_nodes

LOG: DB node id: 0 backend pid: 27498 statement: SELECT pg_catalog.version()

node_id | hostname | port | status | pg_status | lb_weight | role | pg_role | select_cnt | load_balance_node | replication_delay | replication_state | replication_sync_state | last_status_change

---------+----------+------+--------+-----------+-----------+---------+---------+------------+-------------------+-------------------+-------------------+------------------------+---------------------

0 | pg62 | 5432 | up | up | 0.333333 | primary | primary | 2 | true | 0 | | | 2025-12-12 15:31:36

1 | pg63 | 5432 | up | up | 0.333333 | standby | standby | 2 | false | 0 | | | 2025-12-12 15:31:36

2 | pg64 | 5432 | up | up | 0.333333 | standby | standby | 1 | false | 0 | | | 2025-12-12 15:31:36

(3 rows)Failover故障转移

在服务器pg62上停止 PostgreSQL 后,发生故障转移,服务器pg63上的 PostgreSQL 成为新的主数据库。

[root@pg63 bin]# psql -h 192.168.1.65 -p 9999 -U pgpool postgres -c "show pool_nodes"

Password for user pgpool:

LOG: statement: show pool_nodes

LOG: DB node id: 1 backend pid: 56622 statement: SELECT pg_catalog.version()

node_id | hostname | port | status | pg_status | lb_weight | role | pg_role | select_cnt | load_balance_node | replication_delay | replication_state | replication_sync_state | last_status_change