InstantStyle.

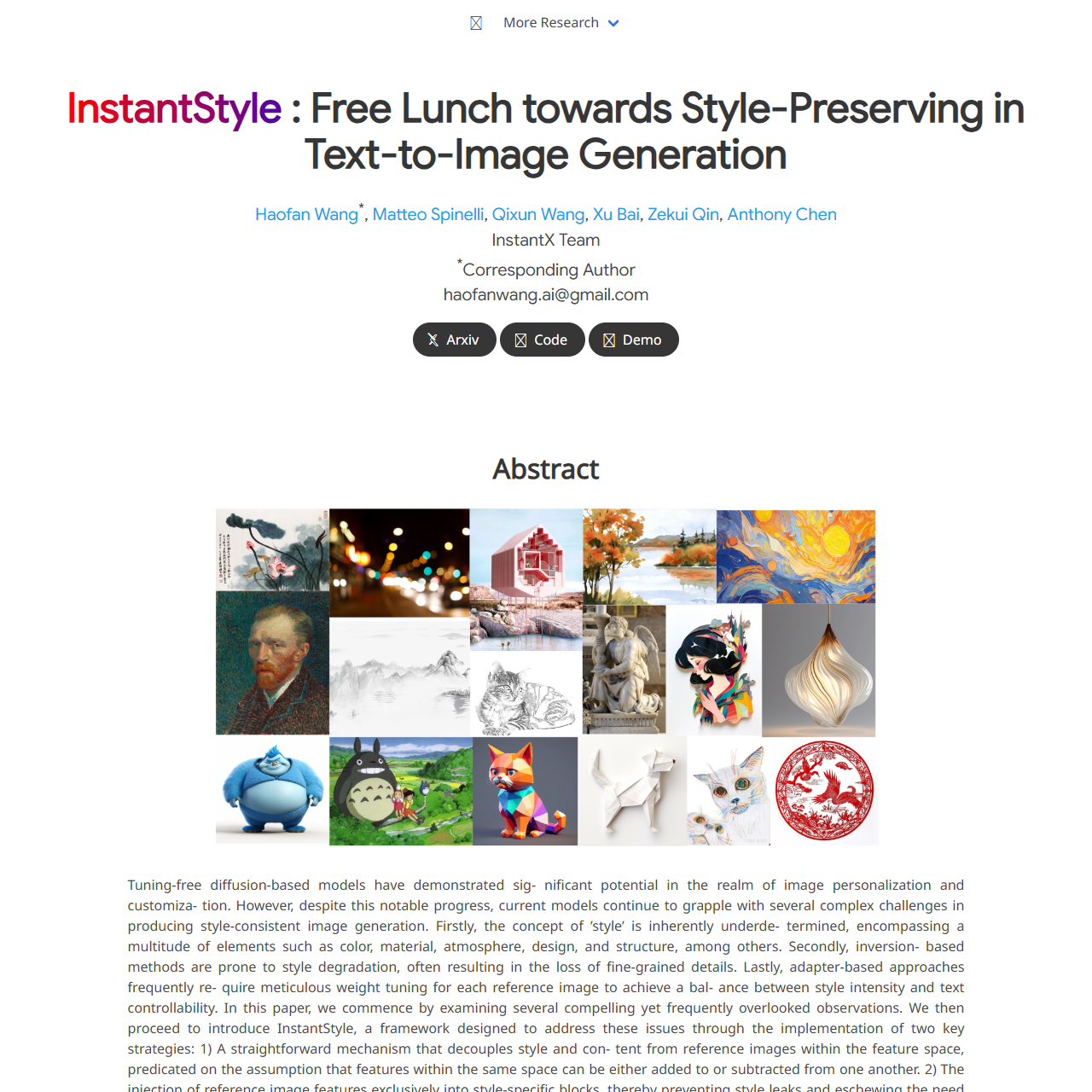

Tuning-free diffusion-based models have demonstrated significant potential in the realm of image personalization and customization. However, despite this notable progress, current models continue to grapple with several complex challenges in producing style-consistent image generation. Firstly, the concept of 'style' is inherently underdetermined, encompassing a multitude of elements such as color, material, atmosphere, design, and structure, among others. Secondly, inversion-based methods are prone to style degradation, often resulting in the loss of fine-grained details. Lastly, adapter-based approaches frequently require meticulous weight tuning for each reference image to achieve a balance between style intensity and text controllability. In this paper, we commence by examining several compelling yet frequently overlooked observations. We then proceed to introduce InstantStyle, a framework designed to address these issues through the implementation of two key strategies: 1) A straightforward mechanism that decouples style and content from reference images within the feature space, predicated on the assumption that features within the same space can be either added to or subtracted from one another. 2) The injection of reference image features exclusively into style-specific blocks, thereby preventing style leaks and eschewing the need for cumbersome weight tuning, which often characterizes more parameter-heavy designs. Our work demonstrates superior visual stylization outcomes, striking an optimal balance between the intensity of style and the controllability of textual elements.
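The first strategy rests on the assumption that features in the same space can be added or subtracted. A minimal sketch of that idea follows, using toy vectors in plain Python. The function name `subtract_content` and the numeric values are hypothetical, chosen only for illustration; a real pipeline would obtain both features from encoders that share an embedding space, which this sketch does not model.

```python
from typing import List


def subtract_content(image_feat: List[float], content_feat: List[float]) -> List[float]:
    """Element-wise image_feat - content_feat for two vectors in the same feature space."""
    if len(image_feat) != len(content_feat):
        raise ValueError("features must share the same dimensionality")
    return [i - c for i, c in zip(image_feat, content_feat)]


# Toy 4-dimensional vectors standing in for real encoder outputs (made-up values).
image_feat = [0.5, 1.0, -0.25, 2.0]    # reference image: style and content mixed
content_feat = [0.5, 0.0, -0.25, 1.0]  # feature describing the content alone

# What remains after subtraction is treated as the style component.
style_feat = subtract_content(image_feat, content_feat)
print(style_feat)
```

The subtraction is only meaningful if both vectors live in the same space, which is why the sketch rejects mismatched dimensions instead of silently truncating.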

Injecting into Style Blocks Only. Empirically, each layer of a deep network captures different semantic information. The key observation in our work is that there exist two specific attention layers that handle style. Specifically, we find that up_blocks.0.attentions.1 and down_blocks.2.attentions.1 capture style (color, material, atmosphere) and spatial layout (structure, composition), respectively. We can use them to implicitly extract style information, further preventing content leakage without weakening the style. The idea is straightforward: having located the style blocks, we inject our image features into these blocks only to achieve style transfer. Furthermore, since the number of adapter parameters is greatly reduced, text controllability is also enhanced. This mechanism is applicable to other attention-based feature injection for editing or other tasks.
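The block-selective injection described above can be sketched as a per-layer scale table: layers inside the chosen block keep a nonzero injection scale and every other layer gets zero. This is an illustrative sketch, not the authors' implementation. The helper `injection_scales` is hypothetical, and the layer names in `example_layers` are an assumed, shortened list written in the style of UNet cross-attention layer names rather than read from a real model.

```python
from typing import Dict, Iterable, Tuple

# Block names as given in the text above.
STYLE_BLOCK = "up_blocks.0.attentions.1"     # color, material, atmosphere
LAYOUT_BLOCK = "down_blocks.2.attentions.1"  # structure, composition


def injection_scales(
    layer_names: Iterable[str],
    target_blocks: Tuple[str, ...] = (STYLE_BLOCK,),
    scale: float = 1.0,
) -> Dict[str, float]:
    """Map each layer name to `scale` if it lies inside a target block, else 0.0."""
    scales = {}
    for name in layer_names:
        # Match on "block." so that e.g. "attentions.1" does not match "attentions.10".
        in_target = any(name.startswith(block + ".") for block in target_blocks)
        scales[name] = scale if in_target else 0.0
    return scales


# Assumed, shortened list of cross-attention layer names (illustrative only).
example_layers = [
    "down_blocks.1.attentions.0.transformer_blocks.0.attn2.processor",
    "down_blocks.2.attentions.1.transformer_blocks.0.attn2.processor",
    "mid_block.attentions.0.transformer_blocks.0.attn2.processor",
    "up_blocks.0.attentions.0.transformer_blocks.0.attn2.processor",
    "up_blocks.0.attentions.1.transformer_blocks.0.attn2.processor",
    "up_blocks.1.attentions.1.transformer_blocks.0.attn2.processor",
]

style_only = injection_scales(example_layers)
style_and_layout = injection_scales(example_layers, (STYLE_BLOCK, LAYOUT_BLOCK))

for name, value in style_only.items():
    print(f"{value:.1f}  {name}")
```

With the default arguments only the layer under `up_blocks.0.attentions.1` receives image features; passing both block names additionally enables the layout block, which would also carry over the reference image's composition.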

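Special notice about InstantStyle

The InstantStyle information provided on this site, 鸟瑞导航, comes from the internet. We do not guarantee the accuracy or completeness of external links, and 鸟瑞导航 does not control where those links point. When this page was indexed at 7:02 PM on September 10, 2025, its content was legal and compliant. If the content later becomes non-compliant, please contact the site administrator to report it and we will remove it; 鸟瑞导航 accepts no liability.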

Related navigation

HITEMS is an AI-based design platform that provides one-click product generation and delivery solutions. We are committed to helping everyone bring their creativity to life and making design simple and accessible. Whether it's a personal project or a brand concept, HITEMS ensures that every idea has the opportunity to become a reality.

Artefacts

Artefacts is a 3D AI toolkit that enables users to effortlessly transform text or 2D images into 3D assets. Unleash your creativity with Artefacts - the future of 3D content creation.

Nvidia·GET3D

NVIDIA invented the GPU and drives advances in AI, HPC, gaming, creative design, autonomous vehicles, and robotics.

OneStory

OneStory is an innovative AI story-generation assistant that uses AI to quickly generate continuous, consistent characters and stories.

Skybox AI

Skybox AI: One-click 360° image generator from Blockade Labs

Hyper3d.

AI 3D generation: Create professional 3...

搜狐简单AI

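An all-in-one AI tool for the AI era, offering users a full range of AI services such as AI drawing, AI writing, and online AI image processing. It provides a large library of image design templates covering use cases such as e-commerce images, logo design, ID photos, smart background cutout, HD image restoration, one-click watermark removal, and one-click background replacement. Even complete beginners can get started with AI easily.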

Objaverse

Objaverse 1.0 is a Massive Dataset of 800K+ Annotated 3D Objects
